I asked Claude a simple question: "If you were working on a web project, which language and framework would you choose and why? Don't think about me as a developer -- think about yourself as an LLM."

It did not pick Rails.

The LLM's honest answer

Claude picked Python and TypeScript. Not because they are better languages -- but because the quality of the code it generates tracks how many high-quality examples of a language exist in its training data.

Python and TypeScript dominate open source repositories, Stack Overflow, tutorials, and documentation. When an LLM writes Python or TypeScript, it draws from an enormously deep well of patterns. It makes fewer mistakes, knows more edge cases, and generates idiomatic code more reliably.

About Rails, Claude said something that should concern every Ruby developer: "I can write solid Rails code, but I'm more likely to hallucinate a method name, use a deprecated API, or miss a Rails 7+ convention. The gap isn't huge, but it's real."

I can confirm this from my own experience. I use Claude Code every day to build Rails applications -- this blog, my daily work, side projects. The AI is good at Rails. But it is noticeably better at TypeScript. It hallucinates less, catches more edge cases, and writes more idiomatic code in languages where the training data is deeper.

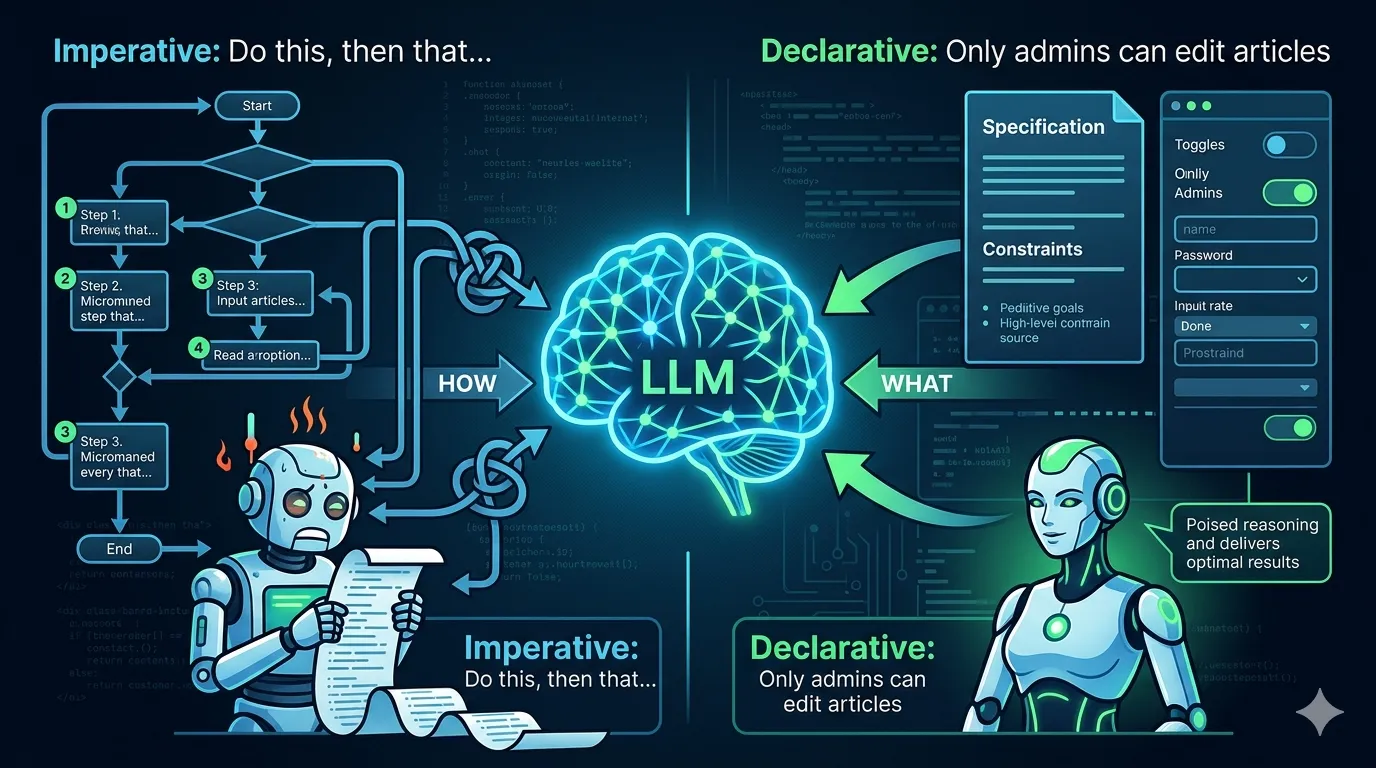

What makes LLMs most productive is not the language itself -- it is how well-typed and explicit the code is. TypeScript beats JavaScript for LLMs because type annotations act as self-documenting constraints. Convention-heavy frameworks like Rails, with all their implicit magic, are paradoxically harder for AI because there is more context the model needs to get right from memory rather than from explicit structure in the code.

I see this every day

Ruby and Rails were designed with a clear philosophy: optimize for developer happiness. Convention over configuration. Don't Repeat Yourself. Make the developer's life easier.

That philosophy was perfect for a world where humans wrote every line of code. But that world is changing fast.

I build with Claude Code daily. I watch it write controllers, models, tests, service objects. A growing percentage of production code -- in my projects and everywhere else -- is being written by AI agents. Claude Code, GitHub Copilot, Cursor, Codex, and dozens of other tools are becoming primary authors of code while developers shift toward reviewing, directing, and architecting.

In this new reality, developer happiness is necessary but no longer sufficient. We also need to think about AI fluency -- how well an LLM can understand, generate, and reason about code in a given ecosystem.

Right now, Ruby on Rails is falling behind on that metric. Not because of any technical shortcoming, but because of a training data gap.

The training data problem

LLMs learn from what they have seen. The more high-quality, diverse, well-documented open source projects exist in a given language, the better the LLM performs with that language. It is that simple.

Look at the JavaScript and Python ecosystems. Thousands upon thousands of open source projects, tutorials, blog posts, and examples. Every possible pattern, every edge case, every architectural decision -- documented and available for training.

Now look at Ruby on Rails. We have some excellent open source projects -- Discourse, Mastodon, GitLab, Solidus. More recently, 37signals made a significant contribution by open sourcing their ONCE products: Campfire (group chat), Writebook (online book publishing), and Fizzy (kanban tracking). These are production-grade Rails applications built by the creators of the framework itself -- exactly the kind of high-quality, real-world codebases that LLMs need to learn from.

But even with these additions, the volume does not compare to what exists in JavaScript or Python. Too many Rails applications remain proprietary, behind closed doors, invisible to training datasets.

This creates a vicious cycle: LLMs perform worse with Rails, so developers building with AI choose other stacks, so fewer new Rails projects get created, so even less training data exists, so LLMs fall further behind.

Rails magic becomes an AI obstacle

The very things that make Rails beautiful for human developers make it harder for AI agents.

Convention over configuration means a lot of implicit knowledge is required. A human Rails developer knows that a model called User maps to a users table, that has_many :posts creates a whole set of methods, that before_action callbacks fire in a specific order. This implicit knowledge is what makes Rails so productive for experienced developers.

But for an LLM, every implicit convention is a potential hallucination point. There is no type signature to anchor to, no explicit declaration to reference. The model has to recall the convention from its training data -- and if that training data is thin, it gets things wrong.
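To make that implicit knowledge concrete, here is a minimal sketch of two naming rules a Rails developer applies without thinking: a model class maps to a pluralized, snake_case table name, and has_many :posts on User looks for a user_id foreign key. It is written in TypeScript only so it runs without a Rails install, and it is a simplified stand-in, not ActiveSupport's inflector; the function names and the two-entry irregular-plural list are assumptions for illustration. None of these rules appear in the application code, so a model has to recall them, and a naive rule that handles User correctly would still turn Person into "persons" instead of "people".

```typescript
// A simplified, hypothetical approximation of two Rails naming conventions.
// Rails' real rules live in ActiveSupport::Inflector and cover far more cases.

// Tiny sample of irregular plurals (assumption: nowhere near complete).
const IRREGULAR_PLURALS = new Map<string, string>([
  ["person", "people"],
  ["child", "children"],
]);

// "BlogPost" -> "blog_post"
function underscore(className: string): string {
  return className.replace(/([a-z\d])([A-Z])/g, "$1_$2").toLowerCase();
}

// Naive English pluralization applied to the last word of a snake_case name.
function pluralize(snakeName: string): string {
  const words = snakeName.split("_");
  const last = words[words.length - 1];
  const irregular = IRREGULAR_PLURALS.get(last);
  let plural: string;
  if (irregular !== undefined) {
    plural = irregular;
  } else if (/[^aeiou]y$/.test(last)) {
    plural = last.slice(0, -1) + "ies"; // category -> categories
  } else if (/(s|x|z|ch|sh)$/.test(last)) {
    plural = last + "es"; // box -> boxes
  } else {
    plural = last + "s"; // user -> users
  }
  words[words.length - 1] = plural;
  return words.join("_");
}

// Model class name -> table name, e.g. "User" -> "users".
function tableName(className: string): string {
  return pluralize(underscore(className));
}

// Foreign key that has_many looks for on the child table, e.g. "User" -> "user_id".
function foreignKey(className: string): string {
  return `${underscore(className)}_id`;
}

console.log(tableName("User"));     // users
console.log(tableName("BlogPost")); // blog_posts
console.log(tableName("Person"));   // people
console.log(foreignKey("User"));    // user_id
```

A human learns these rules once and stops thinking about them; an LLM has to reproduce them from whatever examples it has seen, which is exactly where thin training data shows.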

I hit this regularly. Claude Code will generate a method call that looks right but does not exist. It will use a Rails 6 pattern in a Rails 8 app. It will miss a convention that any experienced Rails developer would know instinctively. The model is not stupid -- it just has less to draw from.

TypeScript, by contrast, spells everything out. The types are right there in the code. An LLM does not need to remember conventions -- it can read the constraints directly.
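For contrast, here is a hypothetical TypeScript version of the same User/Post association with the contract spelled out. The interface lists roughly what has_many :posts provides implicitly in Rails (user.posts, user.post_ids, user.posts.build), but the names HasManyPosts, postIds and buildPost are made up for this sketch, and the in-memory array stands in for a database. An LLM reading this file does not need to remember what the association generates: it is declared in the code, and a call to an undeclared method fails to type-check.

```typescript
// Hypothetical, hand-written model types (not generated by any real tool).
interface Post {
  id: number;
  userId: number; // the foreign key Rails would infer as user_id
  title: string;
}

// The contract that has_many :posts sets up implicitly in Rails,
// declared here so it is visible in the code itself.
interface HasManyPosts {
  posts(): readonly Post[];
  postIds(): number[];
  buildPost(attrs: { title: string }): Post;
}

class User implements HasManyPosts {
  readonly id: number;
  name: string;
  // Simplified in-memory storage (per-user ids, unlike a real posts table).
  private readonly ownPosts: Post[] = [];
  private nextPostId = 1;

  constructor(id: number, name: string) {
    this.id = id;
    this.name = name;
  }

  posts(): readonly Post[] {
    return this.ownPosts;
  }

  postIds(): number[] {
    return this.ownPosts.map((post) => post.id);
  }

  // Creates a post already linked to this user via userId.
  buildPost(attrs: { title: string }): Post {
    const post: Post = { id: this.nextPostId++, userId: this.id, title: attrs.title };
    this.ownPosts.push(post);
    return post;
  }
}

const user = new User(1, "Ada");
user.buildPost({ title: "Hello, Rails" });
console.log(user.postIds().length); // 1
// user.publishAll(); // would not type-check: User declares no publishAll method
```

The trade-off is verbosity: Rails gets all of this from one line, while the explicit version has to write it out. That extra text is precisely what gives a model something to read instead of something to remember.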

This does not mean Rails needs to become TypeScript. But it does mean the Rails community needs to compensate for this structural disadvantage by flooding the training pipeline with high-quality examples.

What I am doing about it -- and what you can do too

I am not going to write a list of things "we as a community should do" and leave it at that. Here is what I am actually doing, and what I think you should consider.

I open source my work. This blog is a Rails 8.1 application, and I have many Ruby on Rails projects in public repositories on my GitHub. Every controller, every model, every test -- it is all training data. If you have a Rails application, even a small one, even an imperfect one, consider open sourcing it. Every public repository helps.

I write about Rails and AI. You are reading this. Blog posts, tutorials, guides -- they all end up in training datasets. I write about how I use Claude Code with Rails, how I integrate LLMs into my SaaS products, what patterns work in production. Every piece of public Rails content makes AI a little bit better at our stack. Write about how you solved that tricky Active Record query. Document your Hotwire patterns. Explain your Turbo Stream architecture.

I document conventions explicitly. The Rails guides are good, but we need more. When I write a tutorial, I spell out the things that experienced Rails developers take for granted. Instead of relying on "every Rails developer knows this," I write it down. LLMs benefit from explicit documentation of patterns and conventions that humans learn through years of experience. It might sound counterintuitive, but we should write content expecting that LLMs will read it and learn from it, so they can in turn teach the developers who use them.

Build reference applications. The Rails community would benefit enormously from a set of well-maintained, well-documented reference applications covering common patterns -- multi-tenancy, API design, real-time features with Hotwire, background job architectures, authentication flows. Not toy examples -- production-grade references. 37signals started this with ONCE. We need more.

Contribute to Rails itself. The framework's own codebase, tests, and documentation are prime training material. Better inline documentation, more descriptive commit messages, clearer test names -- all of this helps LLMs understand Rails conventions.

This matters more than you think

If LLMs consistently perform better with Python and TypeScript than with Ruby, the next generation of developers -- who increasingly rely on AI assistance -- will naturally gravitate away from Rails. Not because Rails is worse, but because their AI tools work better with other stacks.

I have been building SaaS products with Rails for 20 years. I have watched adoption cycles come and go. When Stack Overflow became the de facto knowledge base, languages with more Stack Overflow coverage gained an adoption advantage. Training data is the new Stack Overflow.

Ruby on Rails gave us an era of unmatched developer productivity. It showed the world that web development could be joyful. That philosophy still matters -- arguably more than ever in a world drowning in over-engineered complexity.

I want to make sure that when an AI agent sits down to write code, it can write Rails as fluently as it writes Next.js. And that starts with us giving those AI agents something to learn from.

Open source your projects. Write about your work. Document your patterns. The future of Rails depends on it.